This is a cheat sheet for essential PySpark commands and functions, focused on Spark DataFrames in Python. One call to keep in mind throughout: the RDD/DataFrame collect function retrieves all the elements of the dataset (from all nodes) to the driver node.

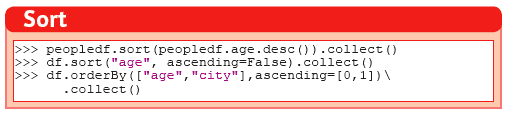

This page contains a collection of Spark pipeline transformation methods that we can use for different problems. Use it as a quick reference for how to do a particular operation on a Spark DataFrame or in PySpark.

These code snippets were tested on Spark 2.4.x and mostly work on Spark 2.3.x as well, but I am not sure about older versions.

Read the partitioned json files from disk

This applies to all supported file types.
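As a minimal sketch of the read, assuming an existing SparkSession passed in as spark and a hypothetical directory such as /data/events that holds the part files: the helper name read_partitioned is illustrative, not a Spark API. The fmt argument is what lets the same call carry over to other supported formats.

```python
def read_partitioned(spark, path, fmt="json"):
    """Load every part file under `path` into a single DataFrame.

    `spark` is an existing SparkSession. `path` is a directory (hypothetical
    here) that contains files such as part-00000.json.
    """
    # DataFrameReader.format(...).load(...) accepts a directory and reads
    # all the part files inside it.
    return spark.read.format(fmt).load(path)
```

For example, read_partitioned(spark, "/data/events", fmt="parquet") would read a Parquet directory the same way.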

Save partitioned files into a single file.

Here we merge all the partitions into one file and dump it to disk. This happens at the driver node, so be careful with the size of the dataset you are dealing with; otherwise, the driver node may run out of memory.

Use the coalesce method to adjust the number of partitions of an RDD based on our needs.
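One way this can look in PySpark, as a sketch rather than the exact snippet this page was written around: coalesce(1) leaves a single partition, so the write produces one part file inside the output directory and every row passes through one task, which is why the memory caveat above matters. The helper name, the output path and the overwrite mode are assumptions.

```python
def write_single_file(df, path, fmt="json"):
    """Write `df` as one part file under the directory `path`."""
    (
        df.coalesce(1)       # collapse to a single partition
        .write
        .mode("overwrite")   # assumption: replacing existing output is acceptable
        .format(fmt)
        .save(path)
    )
```

Spark still creates a directory at path; the single data file inside it is named part-00000-... by Spark.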

Filter rows which meet particular criteria

Map with case class

Use a case class if you want to map on multiple columns with a complex data structure.

Or use the Row class.

Use selectExpr to access inner attributes

selectExpr provides easy access to nested data structures like JSON. You can filter them using any existing UDFs, or use your own UDF to get more flexibility.
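A small sketch of the idea, assuming a DataFrame whose rows carry a struct column named user with a nested address struct; those field names are made up for illustration. Dot notation inside selectExpr reaches into the nested fields, and the result can be filtered with a plain SQL expression.

```python
def select_inner_fields(df):
    """Flatten two nested fields and keep rows where the city is present."""
    return (
        df.selectExpr(
            "user.name AS name",          # hypothetical struct field
            "user.address.city AS city",  # hypothetical nested struct field
        )
        .filter("city IS NOT NULL")
    )
```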

How to access RDD methods from pyspark side

Using standard RDD operations via the PySpark API isn’t straightforward. To get them, we need to invoke .rdd, which converts the DataFrame to an RDD that supports these features.

For example, here we convert a sparse vector to a dense one and sum it column-wise.
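The example can be sketched as follows, assuming a DataFrame with a vector column (the name features is a guess) whose values are pyspark.ml.linalg vectors. Their toArray() method returns a dense NumPy array, so adding two of them adds position by position.

```python
def sum_vector_column(df, vector_col="features"):
    """Return the element-wise sum of a vector column as one dense array."""
    return (
        df.rdd                                        # DataFrame -> RDD of Row objects
        .map(lambda row: row[vector_col].toArray())   # sparse vector -> dense array
        .reduce(lambda left, right: left + right)     # add arrays position by position
    )
```

Note that reduce raises an error on an empty RDD, so an empty DataFrame needs to be handled separately.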

Pyspark Map on multiple columns

Filtering a DataFrame column of type Seq[String]

Filter a column with custom regex and udf

Sum the elements of a column

Remove non-ASCII characters from tokens

Sometimes we only need to work with ASCII text, so it’s better to clean out the other characters.
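A minimal sketch of such a cleanup, assuming the tokens live in an array<string> column (the column name tokens is hypothetical). The two plain Python helpers do the work; with_ascii_tokens only wraps them in a UDF, and it imports PySpark inside the function so the helpers can be tried without a Spark installation.

```python
def to_ascii(token):
    """Drop every character that cannot be encoded as ASCII."""
    return token.encode("ascii", "ignore").decode("ascii")


def clean_tokens(tokens):
    """Clean each token and discard the ones that end up empty."""
    if tokens is None:
        return None
    cleaned = (to_ascii(token) for token in tokens)
    return [token for token in cleaned if token]


def with_ascii_tokens(df, col="tokens"):
    """Replace the array<string> column `col` with its ASCII-only version."""
    from pyspark.sql import functions as F
    from pyspark.sql.types import ArrayType, StringType

    clean_udf = F.udf(clean_tokens, ArrayType(StringType()))
    return df.withColumn(col, clean_udf(F.col(col)))
```

Dropping characters is lossy: an accented word loses its accented letters rather than being transliterated.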

Connecting to jdbc with partition by integer column

When using Spark to read data from a SQL database and then run the rest of the pipeline on it, it’s recommended to partition the data according to its natural segments, or at least on an integer column. Spark can then fire multiple SQL queries to read the data from the SQL server and operate on each result separately, with each query’s result going to its own Spark partition.

The commands below are in PySpark, but the APIs are the same for the Scala version.
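A sketch of a partitioned read with DataFrameReader.jdbc, under the assumption that the matching JDBC driver jar is already on the classpath. The helper name and any URL, table or column passed to it are placeholders. The bounds only decide how the column range is split into numPartitions queries; they do not filter rows, so values outside the range still land in the first or last partition.

```python
def read_table_in_partitions(spark, url, table, column,
                             lower, upper, num_partitions, properties=None):
    """Read `table` over JDBC with one query per range of `column`."""
    return spark.read.jdbc(
        url=url,
        table=table,
        column=column,                  # integer column used to split the reads
        lowerBound=lower,               # used for the stride, not as a filter
        upperBound=upper,
        numPartitions=num_partitions,   # number of parallel queries and partitions
        properties=properties,          # e.g. {"user": ..., "password": ...}
    )
```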

Parse nested json data

This is very helpful when working with PySpark and you want to pass deeply nested JSON data between the JVM and Python processes. Lately the Spark community relies on the Apache Arrow project to avoid multiple serialization/deserialization costs when sending data from Java memory to Python memory or vice versa.

So to process the inner objects, you can use the getItem method to pick out the required parts of the object and pass them over to Python memory via Arrow. In the future Arrow might support arbitrarily nested data, but right now it doesn’t support complex nested formats. The generally recommended option is to go without nesting.
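To illustrate getItem, here is a small sketch that assumes a map column named attributes and an array column named tags; both names and the key user_id are made up. getItem takes a key for a map and a position for an array, and the selected flat columns are what would then cross over to Python.

```python
def extract_inner(df):
    """Pull two flat columns out of nested ones before handing data to Python."""
    return df.select(
        df["attributes"].getItem("user_id").alias("user_id"),  # lookup by map key
        df["tags"].getItem(0).alias("first_tag"),               # lookup by array index
    )
```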

'string ⇒ array<string>' conversion

The type annotation .as[String] makes the conversion explicit instead of relying on an assumed implicit conversion.

A crazy string collection and groupby

This is a chain of operations on a column of type Array[String]: collect the tokens and count the n-gram distribution over all of them.
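A PySpark take on the same pipeline might look like the sketch below. It swaps in the NGram transformer from pyspark.ml.feature, which may differ from the original snippet, and it assumes an array<string> column named tokens. local_ngram_counts is a plain Python reference for checking the Spark result on a small sample; it joins tokens with a space, as NGram does.

```python
from collections import Counter


def local_ngram_counts(token_lists, n=2):
    """Count n-grams over small in-memory token lists (reference version)."""
    counts = Counter()
    for tokens in token_lists:
        for i in range(len(tokens) - n + 1):
            counts[" ".join(tokens[i:i + n])] += 1
    return counts


def ngram_counts(df, tokens_col="tokens", n=2):
    """Count n-grams over an array<string> column, most frequent first."""
    from pyspark.ml.feature import NGram
    from pyspark.sql import functions as F

    with_ngrams = NGram(n=n, inputCol=tokens_col, outputCol="ngrams").transform(df)
    return (
        with_ngrams
        .select(F.explode("ngrams").alias("ngram"))  # one row per n-gram
        .groupBy("ngram")
        .count()
        .orderBy(F.desc("count"))
    )
```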

How to access AWS s3 on spark-shell or pyspark

Most of the time we need a cloud storage provider such as s3 or gs to read and write the data for processing. Very few teams keep an in-house HDFS to handle the data themselves; for the majority, I think cloud storage is easier to start with, and there is no need to worry about size limitations.

Supply the aws credentials via environment variable
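One explicit way to wire this up from Python, as a sketch: read the standard AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY variables and pass them to the s3a connector through spark.hadoop.* options. It assumes the hadoop-aws jar and its AWS SDK dependency are on the classpath; the helper names and the app name are illustrative.

```python
import os


def s3a_options_from_env(env=None):
    """Map the standard AWS environment variables to s3a connector options."""
    env = os.environ if env is None else env
    return {
        "spark.hadoop.fs.s3a.access.key": env["AWS_ACCESS_KEY_ID"],
        "spark.hadoop.fs.s3a.secret.key": env["AWS_SECRET_ACCESS_KEY"],
    }


def build_s3_session(app_name="s3-example"):
    """Create a SparkSession that can read s3a:// paths with those credentials."""
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName(app_name)
    for key, value in s3a_options_from_env().items():
        builder = builder.config(key, value)
    return builder.getOrCreate()
```

A missing variable raises a KeyError early, which is usually easier to debug than an authentication failure at read time.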

Supply the credentials via default aws ~/.aws/config file

Recent versions of awscli expect their configuration to be kept in the ~/.aws/credentials file, but old versions look at the ~/.aws/config path. Spark 2.4.x looks at the ~/.aws/config location, since it comes with default Hadoop jars of version 2.7.x.

Set spark scratch space or tmp directory correctly

This might be required when working with a huge dataset that your machine can’t hold entirely in memory for the given pipeline steps. In those cases the data is spilled to disk and saved in the tmp directory.

Set the properties below to ensure you have enough space in the tmp location.
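As a sketch, one property that controls where Spark puts its scratch files is spark.local.dir. The directory passed in and the helper names here are placeholders. On a cluster, the cluster manager can override this value (SPARK_LOCAL_DIRS in standalone mode, LOCAL_DIRS on YARN), and it has to be set before the session is created.

```python
def scratch_conf(tmp_dir):
    """Spark options that point scratch space at `tmp_dir`."""
    return {"spark.local.dir": tmp_dir}


def session_with_scratch_dir(tmp_dir, app_name="scratch-example"):
    """Create a SparkSession whose spill files go under `tmp_dir`."""
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName(app_name)
    for key, value in scratch_conf(tmp_dir).items():
        builder = builder.config(key, value)
    # getOrCreate returns an already running session unchanged, so call this
    # before any other code starts Spark.
    return builder.getOrCreate()
```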

Pyspark doesn’t support all the data types.

When using Arrow to transport data between JVM and Python memory, Arrow may throw the error below if the types aren’t compatible with the existing converters. Fixes may come in the future from the Arrow project. I’m keeping this here as a reminder of how PySpark gets data from the JVM and what can go wrong in that process.

Work with spark standalone cluster manager

Start the spark clustering in standalone mode

Once you have downloaded the same version of the Spark binary on all the machines, you can start the Spark master and slave processes to form the standalone Spark cluster. You could also run both services on the same machine.

In standalone mode:

A worker can have multiple executors.

A worker is like a node manager in YARN.

We can set the maximum core and memory usage of a worker.

When defining the Spark application via spark-shell or similar, define the executor memory and cores.

When submitting the job, request the executors explicitly, for example 10 executors with 1 CPU core and 2 GB of RAM each.
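For a standalone cluster, that request can be expressed with three properties, sketched here from Python. Standalone mode has no direct executor-count setting, so the count follows from spark.cores.max divided by spark.executor.cores, provided the workers have enough free cores and memory. The helper name is illustrative.

```python
def executor_conf(num_executors=10, cores_per_executor=1, memory_per_executor="2g"):
    """Options asking a standalone cluster for a given executor layout."""
    return {
        "spark.executor.cores": str(cores_per_executor),
        "spark.executor.memory": memory_per_executor,
        # Total cores for the application; the executor count is derived from it.
        "spark.cores.max": str(num_executors * cores_per_executor),
    }
```

Each entry can be passed to SparkSession.builder.config(key, value) or as a --conf key=value flag to spark-submit.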

This page will be updated as and when I see some reusable snippet of code for Spark operations.
